DS-201d · Module 2

Comparing Outputs: Artifacts vs. Code


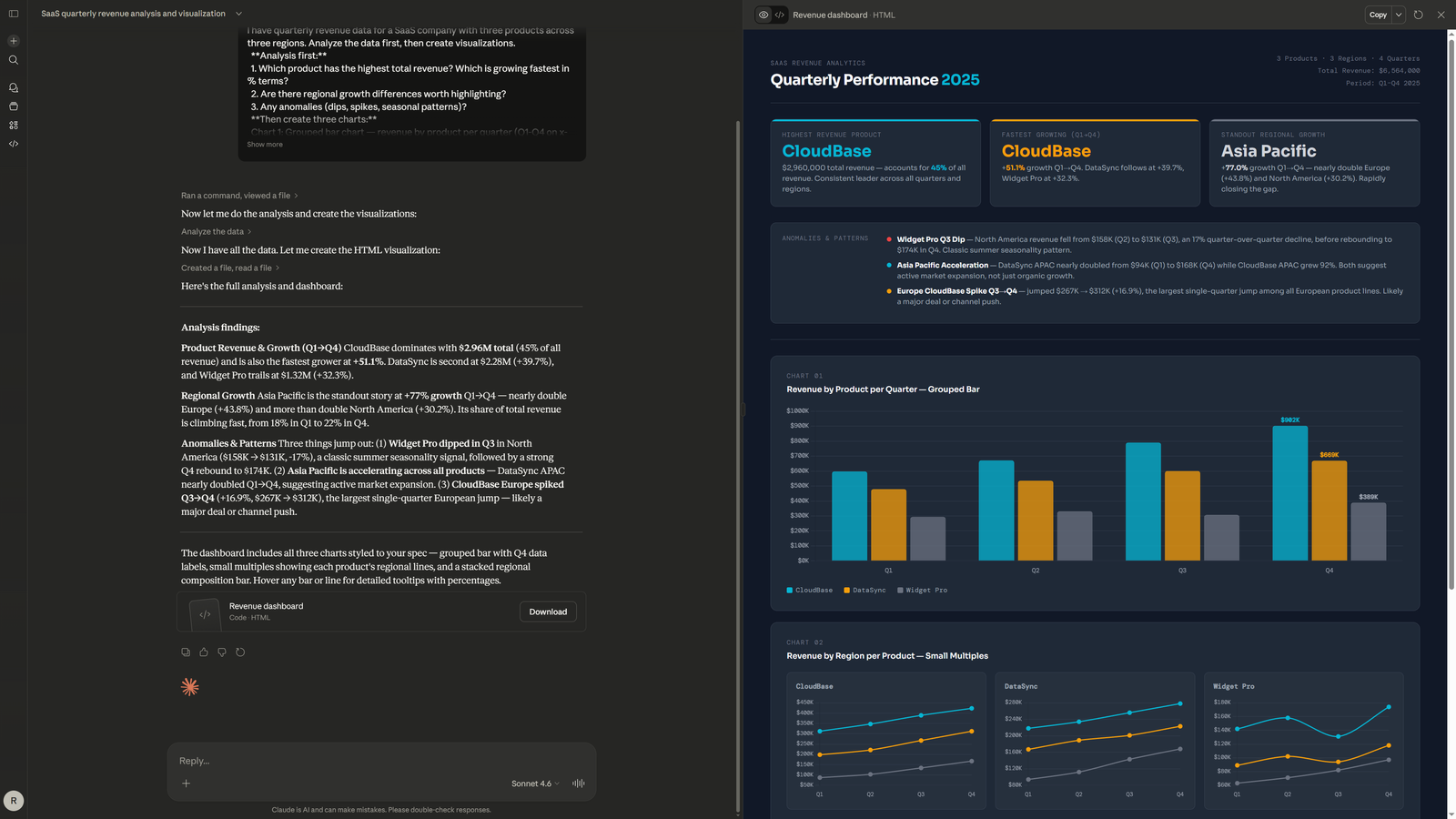

You have now seen the same data visualized through two completely different workflows. Claude gave you an interactive dashboard in seconds — rendered, styled, and ready to screenshot. ChatGPT gave you a Python script that, after setup and execution, produced high-quality static PNGs. Neither approach is universally better. The right choice depends on what you need the visualization for.

- Evaluation Criterion 1: Time to First Visual. Claude wins decisively. Attach data, submit prompt, see dashboard: all within 30 seconds. ChatGPT's workflow adds copy-paste, file save, environment setup, and script execution. If you need to see your data visualized right now, Claude is the tool.

- Evaluation Criterion 2: Output Ownership. ChatGPT wins here. You get a .py file you can modify, commit to git, run on a server, or integrate into a data pipeline. Claude's Artifacts live inside the Claude UI: you can screenshot them, but you cannot run them independently or schedule them for automated reporting.

- Evaluation Criterion 3: Iteration Speed. Claude wins for visual iteration. "Move the legend," "add a trend line," "change the color": each adjustment renders in seconds. ChatGPT iteration means re-running the script each time, checking the output PNGs, and going back for another round. But ChatGPT's code-level iteration gives you finer control when you need it.

- Evaluation Criterion 4: Analytical Depth. Claude wins. The written analysis alongside the Artifact provides context that ChatGPT's code comments do not match. Claude explains why CloudBase dominates, identifies the Widget Pro Q3 anomaly, and frames the regional growth story. ChatGPT's script has code comments, not analytical reasoning.
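
To make the ownership point in Criterion 2 concrete, here is a minimal sketch of what a standalone, schedulable chart script might look like. It is not the script ChatGPT produced in this module. The function name `render_revenue_chart`, the output path, and all numbers are hypothetical placeholders, and the sketch assumes matplotlib is installed.

```python
# Minimal sketch of a standalone chart script that can run unattended.
# All names and numbers here are hypothetical placeholders, not course data.
from pathlib import Path

import matplotlib

matplotlib.use("Agg")  # headless backend: no display needed on a server
import matplotlib.pyplot as plt


def render_revenue_chart(revenue_by_product, out_path):
    """Draw a bar chart of revenue per product and save it as a PNG."""
    out_path = Path(out_path)
    out_path.parent.mkdir(parents=True, exist_ok=True)

    fig, ax = plt.subplots(figsize=(6, 4))
    ax.bar(list(revenue_by_product.keys()), list(revenue_by_product.values()))
    ax.set_title("Revenue by product (placeholder data)")
    ax.set_ylabel("Revenue")
    fig.tight_layout()
    fig.savefig(out_path, dpi=150)
    plt.close(fig)  # release the figure when the script runs repeatedly
    return out_path


if __name__ == "__main__":
    # Placeholder values for illustration only.
    sample = {"Product A": 120.0, "Product B": 80.0, "Product C": 45.0}
    print(render_revenue_chart(sample, "charts/revenue_by_product.png"))
```

Because the script writes a file and needs no display, it can be committed to git and run by a scheduler such as cron or a CI job, which is the kind of automated reporting the criterion describes.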